Project Case Study

GPT-Powered Internal Chatbot with RAG

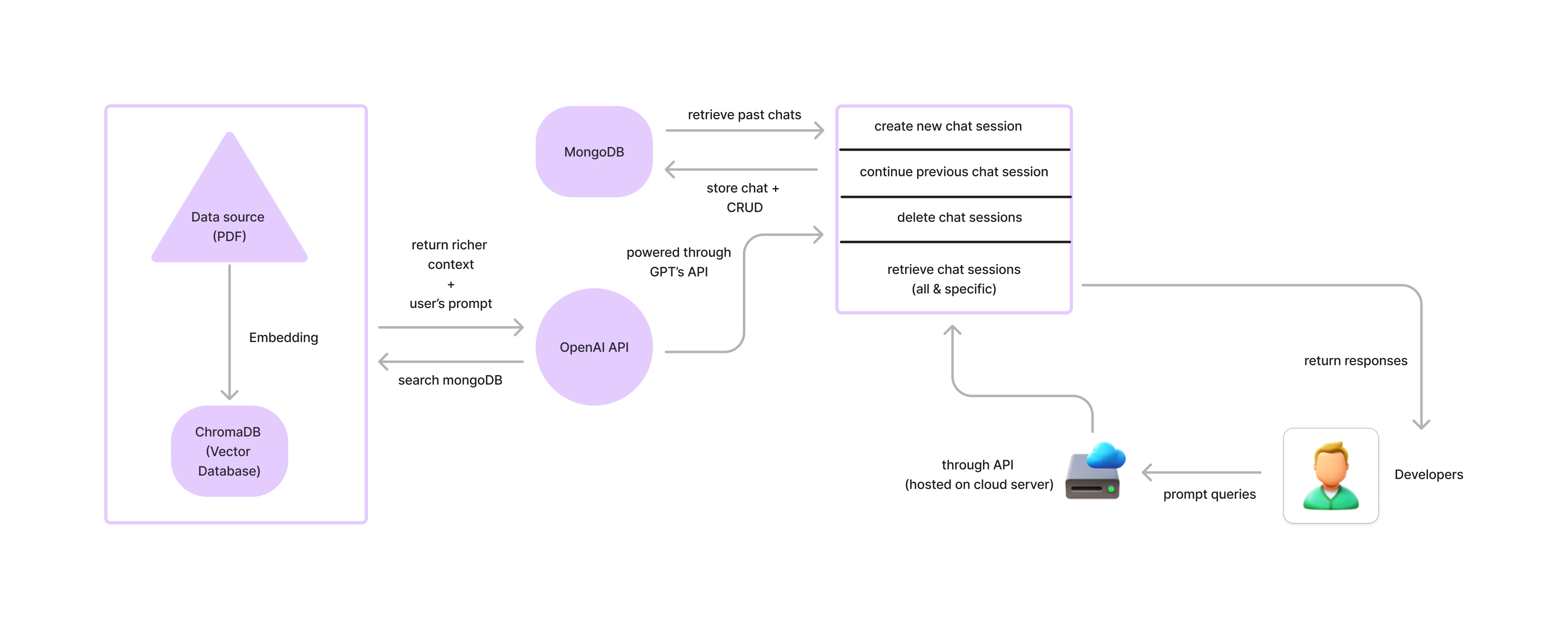

A retrieval-augmented generation system built for a Malaysian tech startup — enabling employees to query internal PDF documentation through a context-aware, session-persistent chatbot backed by GPT-4.

Case Study

Problem & Solution

The Problem

Staff at a Malaysian tech startup spent significant time repeatedly explaining what the company does — to new hires, potential clients, and partners. There was no centralised, always-available source of truth for company FAQs and internal knowledge.

The Solution

A session-persistent chatbot backed by the company's internal documentation. Employees and clients can query it naturally, receive grounded answers from the knowledge base, and follow up with full context preserved — eliminating repetitive manual explanations and onboarding friction.

Architecture

System Diagram

How It Works

The RAG Pipeline

PDF Files: Knowledge Base

Text Chunking: 300 tokens · 100-token overlap

Embeddings: OpenAI API

ChromaDB: Vector Store

Retrieval: History-Aware

GPT-4: Response Generation
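The chunking stage can be pictured as a sliding window. The sketch below is a minimal illustration, not the project's actual code: it assumes the text has already been tokenised into a list, and the function name `chunk_tokens` is hypothetical. With 300-token chunks and a 100-token overlap, each window starts 200 tokens after the previous one.

```python
from typing import List


def chunk_tokens(tokens: List[str], size: int = 300, overlap: int = 100) -> List[List[str]]:
    """Split a token list into windows of `size` tokens sharing `overlap` tokens."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    step = size - overlap  # 300 - 100 = 200 tokens between window starts
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end of the text
    return chunks


# Placeholder tokens stand in for real tokenizer output (assumption).
tokens = [f"t{i}" for i in range(700)]
chunks = chunk_tokens(tokens)
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of embedding some tokens twice.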

Capabilities

Key Features

Persistent Session Memory

Every conversation is tied to a UUID session stored in MongoDB. Multi-turn context is preserved across API calls with full history retrieval.
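As a rough sketch of the idea, the class below keys each conversation by a UUID and appends messages to its history. It is illustrative only: an in-memory dictionary stands in for the MongoDB collection, and the class and method names (`SessionStore`, `create`, `append`, `history`, `delete`) are assumptions rather than the project's API.

```python
import uuid
from typing import Dict, List


class SessionStore:
    """In-memory stand-in for the MongoDB session collection (assumption)."""

    def __init__(self) -> None:
        self._sessions: Dict[str, List[dict]] = {}

    def create(self) -> str:
        """Start a new session and return its UUID as a string."""
        session_id = str(uuid.uuid4())
        self._sessions[session_id] = []
        return session_id

    def append(self, session_id: str, role: str, content: str) -> None:
        """Add one message to the end of a session's history."""
        self._sessions[session_id].append({"role": role, "content": content})

    def history(self, session_id: str) -> List[dict]:
        """Return a copy of the full message history, oldest first."""
        return list(self._sessions[session_id])

    def delete(self, session_id: str) -> bool:
        """Remove a session; return False if it did not exist."""
        return self._sessions.pop(session_id, None) is not None


store = SessionStore()
sid = store.create()
store.append(sid, "user", "What does the company do?")
store.append(sid, "assistant", "(answer grounded in the knowledge base)")
store.append(sid, "user", "Who is it for?")
```

Because every API call carries the session ID, the server can reload the full history before answering, which is what lets a follow-up such as "Who is it for?" make sense.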

History-Aware Retrieval

Follow-up questions are reformulated by the LLM into standalone queries before hitting the vector store, so retrieved chunks reflect the conversation context rather than only the wording of the latest message.
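One way to picture this step: the chat history and the new question are packed into a rewrite prompt, and the model's reply becomes the search query. The sketch below is an assumption-laden illustration. The prompt wording, the function names, and the `llm` callable are hypothetical, and `fake_llm` is a stub that returns a canned reply so the example runs without an API key.

```python
from typing import Callable, List, Tuple

# Prompt wording is illustrative, not taken from the project.
REWRITE_INSTRUCTION = (
    "Given the conversation so far and a follow-up question, rewrite the "
    "follow-up as a standalone question. Do not answer it."
)


def build_rewrite_prompt(history: List[Tuple[str, str]], question: str) -> str:
    """Combine the instruction, prior turns, and the follow-up into one prompt."""
    lines = [REWRITE_INSTRUCTION, "", "Conversation:"]
    lines += [f"{role}: {text}" for role, text in history]
    lines += ["", f"Follow-up: {question}", "Standalone question:"]
    return "\n".join(lines)


def standalone_query(
    history: List[Tuple[str, str]], question: str, llm: Callable[[str], str]
) -> str:
    """Return the query to send to the vector store."""
    if not history:
        return question  # first turn: nothing to resolve against
    return llm(build_rewrite_prompt(history, question)).strip()


def fake_llm(prompt: str) -> str:
    """Stub standing in for the real model call (assumption)."""
    return "How much does the company's product cost?"


history = [("user", "What does the company sell?"), ("assistant", "A product.")]
query = standalone_query(history, "How much does it cost?", fake_llm)
```

Without the rewrite, a query like "How much does it cost?" would be embedded with no indication of what "it" refers to.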

Persistent Vector Embeddings

ChromaDB persists the embedding index to disk. The knowledge base survives restarts — no re-indexing required unless documents change.

Full Session CRUD API

REST endpoints for creating sessions, continuing chats, fetching history, listing all sessions, and deleting sessions cleanly.
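The five operations can be summarised as a small route table. The paths below are the ones listed under REST API. The HTTP methods and operation names are assumptions added for illustration, since the source gives only paths and descriptions, and the `resolve` helper is hypothetical.

```python
import re
from typing import Dict, Optional, Tuple

# Methods and operation names are assumed; only the paths come from the source.
ROUTES = [
    ("POST", "/chat", "create_session"),
    ("POST", "/chat/{session_id}", "continue_chat"),
    ("GET", "/session/{session_id}", "get_history"),
    ("GET", "/sessions", "list_sessions"),
    ("DELETE", "/chat/{session_id}", "delete_session"),
]


def resolve(method: str, path: str) -> Optional[Tuple[str, Dict[str, str]]]:
    """Return (operation name, path parameters) for a request, or None."""
    for route_method, template, operation in ROUTES:
        # Turn "{session_id}" into a named group matching one path segment.
        pattern = re.sub(r"\{(\w+)\}", r"(?P<\1>[^/]+)", template)
        match = re.fullmatch(pattern, path)
        if method == route_method and match:
            return operation, match.groupdict()
    return None


deleted = resolve("DELETE", "/chat/1234")
```

Two operations share the path /chat/{session_id}, so the HTTP method is what tells a continued conversation apart from a deletion.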

Document-Grounded Answers

The system prompt instructs the LLM to answer only from indexed document content, which limits hallucination beyond the knowledge base.
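A grounding prompt of this kind might look roughly like the following. This is a sketch under assumptions: the template wording and the `build_messages` helper are hypothetical, and the message format simply follows the common role/content chat convention.

```python
from typing import Dict, List

# Template wording is illustrative, not the project's actual system prompt.
SYSTEM_TEMPLATE = (
    "Answer using only the context below. If the context does not contain "
    "the answer, say you do not know.\n\nContext:\n{context}"
)


def build_messages(chunks: List[str], question: str) -> List[Dict[str, str]]:
    """Place retrieved chunks in the system message and the question after it."""
    context = "\n\n".join(f"[{i + 1}] {chunk}" for i, chunk in enumerate(chunks))
    return [
        {"role": "system", "content": SYSTEM_TEMPLATE.format(context=context)},
        {"role": "user", "content": question},
    ]


messages = build_messages(["Chunk A", "Chunk B"], "What is X?")
```

Giving the model an explicit way out ("say you do not know") matters as much as the restriction itself, because otherwise it has no sanctioned response when retrieval comes back empty or off-topic.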

Zero-Friction Knowledge Updates

Add PDFs to the /data directory and restart — chunking, embedding, and indexing happen automatically on server startup.
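A startup check along these lines could decide whether the PDFs need to be re-indexed. It is a sketch of one possible approach, not the project's implementation: the fingerprinting scheme, the manifest file, and the function names are all assumptions, and the actual chunking and embedding calls are left out.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def fingerprint(data_dir: Path) -> str:
    """Hash the names and contents of every PDF so any change alters the digest."""
    digest = hashlib.sha256()
    for pdf in sorted(data_dir.glob("*.pdf")):
        digest.update(pdf.name.encode("utf-8"))
        digest.update(pdf.read_bytes())
    return digest.hexdigest()


def needs_reindex(data_dir: Path, manifest: Path) -> bool:
    """Return True, and record the new fingerprint, when the PDFs have changed."""
    current = fingerprint(data_dir)
    previous = json.loads(manifest.read_text())["sha256"] if manifest.exists() else None
    if current == previous:
        return False
    manifest.write_text(json.dumps({"sha256": current}))
    return True


# Simulate three server starts against a temporary data directory.
with tempfile.TemporaryDirectory() as tmp:
    data = Path(tmp) / "data"
    data.mkdir()
    manifest = Path(tmp) / "manifest.json"
    (data / "faq.pdf").write_bytes(b"placeholder bytes for a PDF")
    first_start = needs_reindex(data, manifest)   # nothing indexed yet
    second_start = needs_reindex(data, manifest)  # no files changed
    (data / "handbook.pdf").write_bytes(b"another placeholder")
    third_start = needs_reindex(data, manifest)   # a new PDF was added
```

When the check returns True, the server would run chunking, embedding, and indexing before accepting requests. Otherwise it can reuse the index already persisted on disk.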

Interface

REST API

/chat: Create a new chat session and get the first response

/chat/{session_id}: Continue an existing conversation

/session/{session_id}: Retrieve the full message history for a session

/sessions: List all active session IDs and descriptions

/chat/{session_id}: Delete a session and its chat history

Stack

Technology

API Layer

RAG Framework

Vector Database

Language Model

Session Storage

Document Processing