Project Case Study

Semantic API Discovery Platform

A semantic search system, built for a major Malaysian financial institution, that lets developers find API documentation by describing what they need.

Case Study

Problem & Solution

The Problem

Developers navigating a large financial institution's API catalogue had to scroll through pages of documentation or rely on exact keyword matches to find what they needed. This created friction, slowed development velocity, and led to APIs being underutilised simply because they were hard to discover.

The Solution

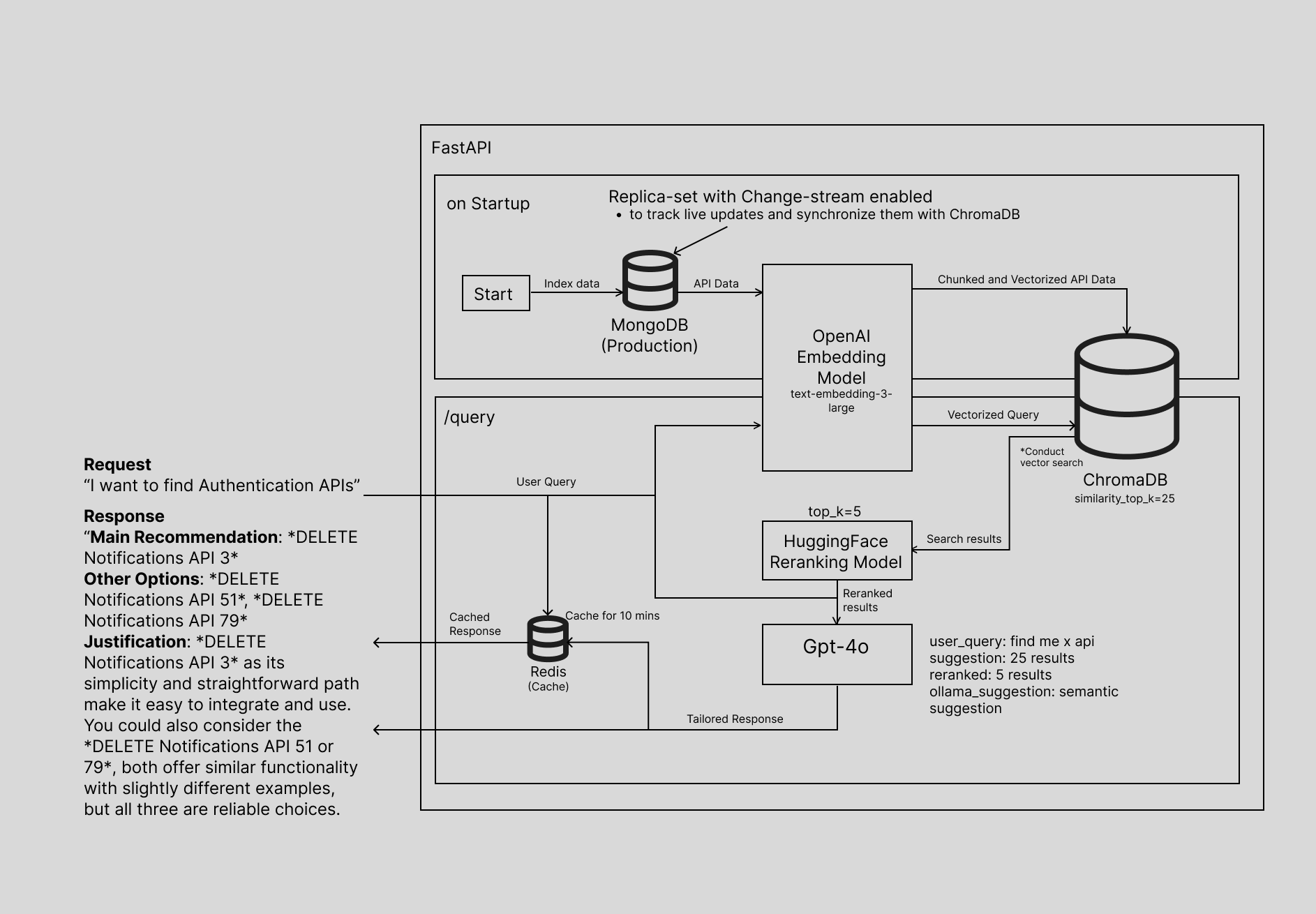

A semantic search system that understands developer intent. Ask “I want to find Authentication APIs” and the system returns a main recommendation, ranked alternatives, and a plain-English justification of why each result relates to the query. No exact keywords are needed.

Architecture

System Diagram

How It Works

The Search Pipeline

MongoDB: API data source

OpenAI Embeddings: text-embedding-3-large

ChromaDB: vector store

HuggingFace Reranker: 25 candidates → top 5 results

GPT-4o: response generation

Redis Cache: 10-minute TTL

Capabilities

Key Features

Semantic Intent Search

Find APIs by describing what you need in plain English, not exact keywords. The system embeds the query with text-embedding-3-large and runs a vector similarity search across the full API catalogue stored in ChromaDB.
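To make the similarity step concrete, here is a minimal sketch of a top-k vector search in plain Python. It assumes cosine similarity as the metric (the case study does not say which distance ChromaDB is configured with), and the three-dimensional vectors and API identifiers are hypothetical stand-ins for real embeddings and catalogue entries.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec: List[float],
          index: Dict[str, List[float]],
          k: int = 25) -> List[Tuple[str, float]]:
    """Score every stored vector against the query and keep the k best."""
    scored = [(api_id, cosine_similarity(query_vec, vec))
              for api_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy vectors standing in for real embeddings (hypothetical data).
toy_index = {
    "auth-token": [0.9, 0.1, 0.0],
    "payments-transfer": [0.0, 0.2, 0.9],
    "auth-login": [0.8, 0.3, 0.1],
}
query_vector = [1.0, 0.2, 0.0]  # pretend embedding of an authentication query
results = top_k(query_vector, toy_index, k=2)
```

A real vector store avoids this brute-force scan by using an approximate nearest-neighbour index, but the ranking idea is the same: the closest vectors become the candidate set.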

Contextual Recommendations

Returns a main recommendation plus alternative options, each with a plain-English justification explaining exactly why it matches the query.

HuggingFace Reranking

Vector search retrieves 25 candidates; a HuggingFace reranking model then rescores them against the query and keeps the 5 most relevant, which are passed to the LLM.
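The retrieve-then-rerank pattern can be sketched as a function that rescores every candidate with a second, more precise scorer and keeps the best few. The scorer below is a deliberately simple token-overlap stand-in, not the HuggingFace model the project uses (the case study does not name that model), and the candidate descriptions are hypothetical.

```python
from typing import Callable, List, Sequence


def rerank(query: str,
           candidates: Sequence[str],
           score: Callable[[str, str], float],
           keep: int = 5) -> List[str]:
    """Rescore each candidate against the query and return the `keep` best.

    Ties keep their original retrieval order.
    """
    scored = [(score(query, doc), position, doc)
              for position, doc in enumerate(candidates)]
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [doc for _, _, doc in scored[:keep]]


def token_overlap(query: str, doc: str) -> float:
    """Stand-in scorer: fraction of query tokens that appear in the document."""
    query_tokens = set(query.lower().split())
    doc_tokens = set(doc.lower().split())
    if not query_tokens:
        return 0.0
    return len(query_tokens & doc_tokens) / len(query_tokens)


# Hypothetical candidates, as if returned by the vector search.
candidates = [
    "Transfer funds between accounts",
    "Issue an OAuth access token for authentication",
    "List branch locations",
    "Refresh an authentication token",
]
top = rerank("authentication token", candidates, token_overlap, keep=2)
```

In the described pipeline, `candidates` would hold the 25 vector-search hits and `keep` would stay at 5. Because the scorer is passed in as a function, a model-backed scorer can replace the toy one without changing `rerank`.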

Live Data Sync

A MongoDB replica set with change streams enabled keeps ChromaDB in sync automatically as API documentation is updated, so no manual re-indexing is needed.
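One way to picture the sync step is a handler that maps each change event onto the vector index. The event fields used here (`operationType`, `documentKey`, `fullDocument`) are the standard MongoDB change-event fields. Everything else is an assumption made for illustration: the `description` field, the in-memory dictionary standing in for ChromaDB, and the fake embedding function.

```python
from typing import Any, Callable, Dict, List


def apply_change(event: Dict[str, Any],
                 index: Dict[str, List[float]],
                 embed: Callable[[str], List[float]]) -> None:
    """Mirror one MongoDB change event into a vector index (a dict here)."""
    doc_id = str(event["documentKey"]["_id"])
    operation = event["operationType"]
    if operation == "delete":
        index.pop(doc_id, None)
    elif operation in ("insert", "update", "replace"):
        document = event.get("fullDocument")
        if document is not None:
            # "description" is a hypothetical field holding the API's text.
            index[doc_id] = embed(document["description"])


def fake_embed(text: str) -> List[float]:
    """Stand-in for a real embedding call; returns a 1-dimensional vector."""
    return [float(len(text))]


index: Dict[str, List[float]] = {}
apply_change(
    {"operationType": "insert",
     "documentKey": {"_id": "a1"},
     "fullDocument": {"description": "Login"}},
    index,
    fake_embed,
)
```

In a live service the events would come from a change-stream cursor. With PyMongo, for example, `collection.watch(full_document="updateLookup")` asks the server to include the full document on update events, which this handler relies on.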

Redis Response Caching

Identical queries are served from cache for 10 minutes, reducing LLM calls and improving response latency for repeated searches.
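The caching behaviour can be sketched with a small in-memory cache that expires entries after 600 seconds. This is a stand-in for Redis, not the project's code: the key prefix is hypothetical, and normalizing case and whitespace before hashing is an assumption about what counts as an "identical" query.

```python
import hashlib
import time
from typing import Callable, Dict, Optional, Tuple

TTL_SECONDS = 600  # 10 minutes, as stated in the text


def cache_key(query: str) -> str:
    """Build a stable key; lowercasing and whitespace folding are assumptions."""
    normalized = " ".join(query.lower().split())
    digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
    return "search:" + digest


class TTLCache:
    """Minimal in-memory stand-in for a Redis cache with per-key expiry."""

    def __init__(self,
                 ttl: float = TTL_SECONDS,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl
        self._clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}

    def get(self, key: str) -> Optional[str]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: behave like a cache miss
            return None
        return value

    def set(self, key: str, value: str) -> None:
        self._store[key] = (self._clock() + self._ttl, value)


cache = TTLCache()
key = cache_key("I want to find Authentication APIs")
if cache.get(key) is None:
    # Cache miss: this is where the full search pipeline would run.
    cache.set(key, "generated answer")
```

With Redis itself, the equivalent of `set` is a single `SET key value EX 600` command, and the server handles expiry.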

GPT-4o Grounded Generation

Responses are grounded strictly in retrieved API data. The LLM formats a tailored answer with ranked suggestions and rationale, not hallucinated content.
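Grounding usually comes down to how the prompt is assembled: the retrieved entries are placed in the prompt and the model is told to use nothing else. The sketch below shows that idea only. The instruction wording and the `name` and `description` fields are hypothetical, since the case study does not show the actual prompt.

```python
from typing import Dict, List


def build_grounded_prompt(query: str, apis: List[Dict[str, str]]) -> str:
    """Assemble a prompt that restricts the model to the retrieved entries."""
    lines = [
        "Answer using ONLY the API entries listed below.",
        "If none of them fits the request, say so instead of guessing.",
        "",
        "API entries:",
    ]
    for rank, api in enumerate(apis, start=1):
        lines.append(f"{rank}. {api['name']}: {api['description']}")
    lines += [
        "",
        f"Developer request: {query}",
        "Return one main recommendation, alternatives, "
        "and a short justification for each.",
    ]
    return "\n".join(lines)


# Hypothetical reranked result used to build the prompt.
prompt = build_grounded_prompt(
    "I want to find Authentication APIs",
    [{"name": "Auth Token API", "description": "Issues OAuth access tokens."}],
)
```

The resulting string would be sent to the LLM as the message content. Listing entries in reranked order lets the model's "main recommendation" follow the retrieval ranking.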

Stack

Technology

API Layer

Database: MongoDB

Embedding: OpenAI text-embedding-3-large

Vector Store: ChromaDB

Reranking: HuggingFace reranker

LLM & Cache: GPT-4o, Redis